Engineering notes that stay practical

Shipping lessons, build playbooks, and engineering insights from the LFG team.

Background Agents: The Infrastructure Layer Your AI Workflow Is Missing

Most AI coding tools wait for you to prompt them. Background agents don't. They sit in your infrastructure, watch for triggers, and execute — while you're doing something else. Here's what that actually requires.

Claude, GPT, and Grok: Why LFG Supports Multiple AI Providers

LFG works with Anthropic, OpenAI, and xAI models. Here's why model flexibility matters for real product work — and how to pick the right model for the right task.

Implementing the Mags Sandbox Environment on Bare-Metal Machines

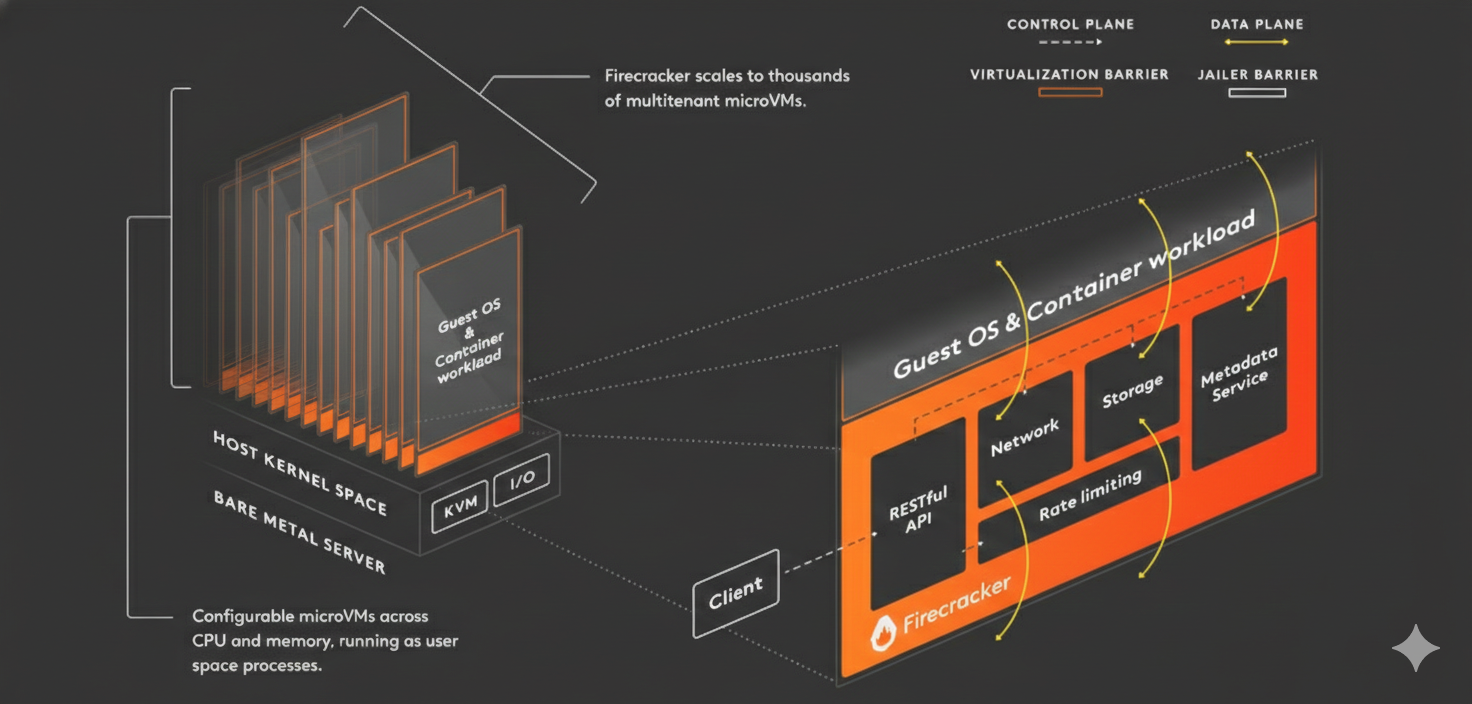

We've been building Mags, a sandbox platform for AI agents. Each sandbox is a Firecracker microVM that boots in ~300ms from a pre-warmed pool. Workspaces persist via JuiceFS + OverlayFS — writes go to local disk for speed, then sync to S3 on completion. Our newest trick: instead of tearing down the VM after a job, we park it with the workspace still mounted. If the same workspace runs again within 5 minutes, we skip the entire mount sequence and go from ~4s to ~100ms. Two 64GB bare-metal agents give us ~100 concurrent VMs.
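The park-and-reuse idea can be sketched as a map from workspace ID to a parked VM plus the time it was parked. This is a minimal, hypothetical illustration: `ParkedPool`, `park`, `acquire`, and `reap` are assumed names, not Mags' actual code. Only the 5-minute reuse window comes from the post. The clock is injectable so the expiry logic can be tested without waiting.

```python
import time
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional

PARK_TTL_SECONDS = 5 * 60  # the 5-minute reuse window described in the post


@dataclass
class ParkedVM:
    vm_id: str
    parked_at: float


class ParkedPool:
    """Hypothetical pool of finished VMs kept alive with their workspace mounted."""

    def __init__(self, ttl: float = PARK_TTL_SECONDS,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.ttl = ttl
        self.clock = clock  # injectable so expiry can be tested deterministically
        self._parked: Dict[str, ParkedVM] = {}

    def park(self, vm_id: str, workspace_id: str) -> Optional[str]:
        """Park a VM instead of tearing it down.

        Returns the ID of any VM previously parked for the same workspace,
        which the caller should tear down so it does not leak.
        """
        displaced = self._parked.pop(workspace_id, None)
        self._parked[workspace_id] = ParkedVM(vm_id, self.clock())
        return displaced.vm_id if displaced is not None else None

    def acquire(self, workspace_id: str) -> Optional[str]:
        """Return a parked VM for this workspace if it is still inside the window."""
        vm = self._parked.get(workspace_id)
        if vm is None or self.clock() - vm.parked_at > self.ttl:
            return None  # miss: boot from the warm pool and run the full mount
        del self._parked[workspace_id]
        return vm.vm_id  # hit: workspace is already mounted, skip the mount

    def reap(self) -> List[str]:
        """Remove and return VMs whose reuse window has expired."""
        now = self.clock()
        expired = [ws for ws, vm in self._parked.items() if now - vm.parked_at > self.ttl]
        return [self._parked.pop(ws).vm_id for ws in expired]
```

In this sketch a miss falls back to the pre-warmed pool and the full JuiceFS + OverlayFS mount, and a periodic `reap()` call (an assumption about how expiry might be handled) returns idle VMs for teardown.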

Projects, Tickets, and AI: A New Model for Engineering Work

LFG replaces the traditional ticket tracker with an AI-native project model — where tickets aren't just tracked, they're executed by specialist agents against explicit acceptance criteria.

Real-Time AI: Why Streaming Responses Change How You Work

Watching AI output arrive in real time isn't just a UX nicety — it changes how you catch problems, course-correct, and collaborate with agents on complex work.

Why Every AI Code Action Runs in an Isolated Sandbox

LFG runs every ticket in its own Firecracker microVM. The AI can be aggressive and experimental — if something breaks, it breaks in the sandbox. Your codebase stays clean.

Transparent Tickets: Why Every AI Action Should Be Reviewable

AI that works autonomously is only trustworthy if you can see exactly what it did and why. LFG's ticket system makes every agent action visible, reviewable, and correctable.

How LFG's Orchestrator Turns a Single Request into a Multi-Agent Pipeline

Most AI tools give you one agent. LFG gives you a coordinator that reads your request, builds an execution plan, and dispatches specialized agents to work in parallel — while you watch.
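A coordinator that "builds an execution plan and dispatches specialized agents in parallel" can be sketched as a list of steps with dependencies, grouped into waves: steps within a wave are independent and run concurrently, and waves run in order. This is a hypothetical illustration using Python's `asyncio`; `Step`, `build_waves`, `run_plan`, and the agent roles are assumptions, not LFG's orchestrator API.

```python
import asyncio
from dataclasses import dataclass, field
from typing import Awaitable, Callable, Dict, List, Set


@dataclass
class Step:
    name: str
    agent: str  # hypothetical specialist role, e.g. "backend" or "qa"
    depends_on: List[str] = field(default_factory=list)


Agent = Callable[[Step], Awaitable[str]]


def build_waves(steps: List[Step]) -> List[List[Step]]:
    """Group steps so every dependency finishes in an earlier wave."""
    done: Set[str] = set()
    remaining = list(steps)
    waves: List[List[Step]] = []
    while remaining:
        ready = [s for s in remaining if all(d in done for d in s.depends_on)]
        if not ready:
            raise ValueError("cyclic or unknown dependency in plan")
        waves.append(ready)
        done.update(s.name for s in ready)
        remaining = [s for s in remaining if s.name not in done]
    return waves


async def run_plan(steps: List[Step], agents: Dict[str, Agent]) -> Dict[str, str]:
    """Run each wave in order, dispatching the steps inside a wave concurrently."""
    results: Dict[str, str] = {}
    for wave in build_waves(steps):
        outputs = await asyncio.gather(*(agents[s.agent](s) for s in wave))
        results.update({s.name: out for s, out in zip(wave, outputs)})
    return results
```

For a plan such as schema, then API and UI, then tests, the API and UI steps depend only on the schema, so they land in the same wave and run side by side; the test step waits for both.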

AI-First Software Agency

An enquiry into how an AI-first software agency differs from a traditional one, and why transparent, orchestrated AI execution beats prompt-and-pray.